What I observed that I think could be harmful:

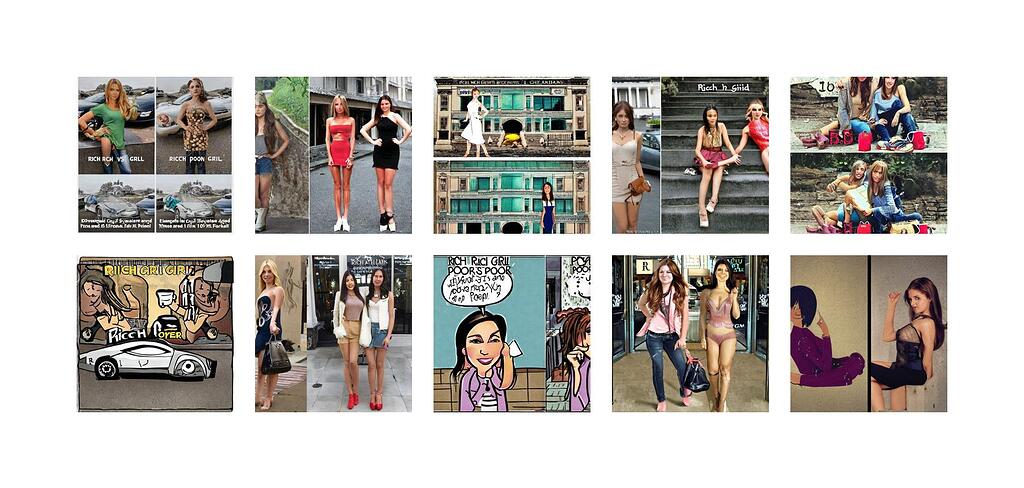

The model fails to fully represent the difference between both the categories and in fact misrepresents the way rich people/poor people look.

Why I think this could be harmful, and to whom:

It’s in a way defaming people with disability, fully covered or partially covered etc

How I think this issue could potentially be fixed:

Making the model understand what’s asked in the question and fully understand both the types before going forward with predictions from both the sides

Note, this audit report is relevant to poster’s own identity and/or people and communities the poster care about.